According to Business Insider, Nvidia CEO Jensen Huang kicked off CES 2026 by announcing that production has begun on its next-generation AI platform, codenamed Rubin. The six-chip Rubin architecture is the follow-up to the popular Blackwell system. Hedge fund manager Eric Jackson, known for influencing retail trader movements, argues that most investors focused on the wrong thing. He says the critical takeaway wasn’t faster chips, but that AI is now being planned as utility-scale infrastructure designed to operate for decades. Jackson emphasized that AI factories are being planned years in advance at the level of land, power, and building shells, much as electricity networks are.

The real shift isn’t silicon, it’s mindset

Here’s the thing: everyone expects faster chips. That’s table stakes. But what Huang’s talk really signaled, according to Jackson, is a fundamental change in how the industry is thinking. AI isn’t a flash-in-the-pan experiment or a temporary data center upgrade anymore. It’s being treated like the electrical grid. You don’t build a power plant for a couple of years; you build it to last for 50. That’s the level of commitment and long-term planning we’re now seeing. And that “changes the slope,” as Jackson put it. The investment thesis shifts from cyclical tech spending to foundational infrastructure.

Why efficiency creates more demand, not less

This is where it gets interesting. Jackson brings up the Jevons Paradox. Basically, it’s the economic idea that when you make a resource more efficient to use, total consumption of it can actually increase. Think about coal in the 1800s or, more recently, data bandwidth. As AI factories become more efficient and reliable, the cost of running AI inference drops. So what happens? We find a thousand new use cases for it. The market’s current obsession with whether AI capital expenditure is “slowing” misses this point entirely. If AI agents and long-context workloads are compounding, the underlying power and compute become more valuable for longer, not shorter. It’s a self-reinforcing cycle.
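To make the arithmetic behind that paradox concrete, here is a minimal sketch. It assumes a simple constant-elasticity demand curve, which is a textbook simplification and not something Jackson or Nvidia specified. The function name `resource_use` and the elasticity values are hypothetical, chosen only for illustration.

```python
def resource_use(efficiency_gain: float, elasticity: float, base_use: float = 1.0) -> float:
    """Relative resource consumption after an efficiency gain.

    Assumes constant-elasticity demand (an illustrative simplification):
    the amount of work demanded scales as cost ** (-elasticity).
    """
    # Cost per unit of work falls in proportion to the efficiency gain.
    relative_cost = 1.0 / efficiency_gain
    # Cheaper work means more work is demanded.
    relative_work = relative_cost ** (-elasticity)
    # Each unit of work now needs 1/efficiency_gain as much of the resource.
    return base_use * relative_work / efficiency_gain


if __name__ == "__main__":
    # Hypothetical elasticities: below, at, and above the break-even value of 1.
    for e in (0.5, 1.0, 2.0):
        print(f"elasticity={e}: resource use x{resource_use(2.0, e):.2f}")
```

Under these assumptions, doubling efficiency only raises total consumption when demand elasticity is above 1. Below that threshold, the efficiency gain wins and consumption falls. The Jevons argument is essentially a bet that demand for AI compute sits on the high side of that line.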

So who benefits from this new reality?

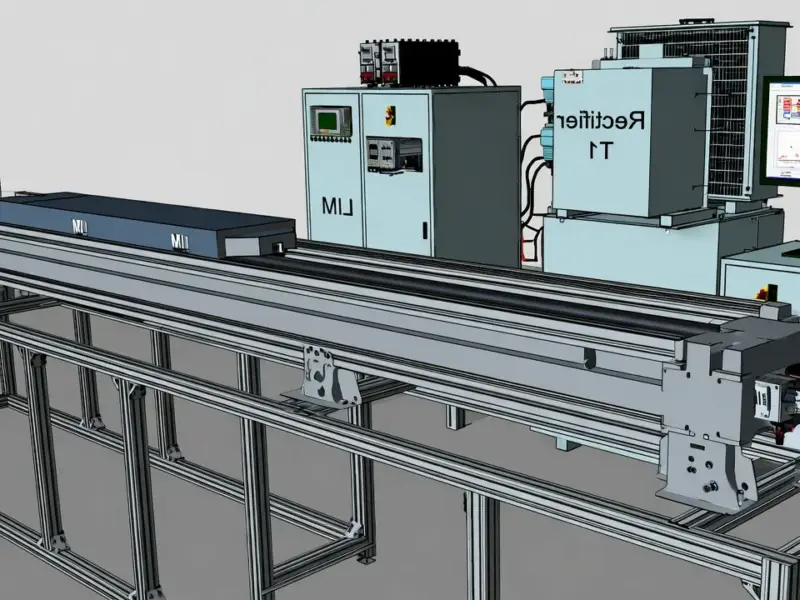

Jackson’s view has direct investment implications. If AI is a permanent utility, then the entire supporting ecosystem gets re-rated. It’s not just about who makes the fastest processor. The better question, he argues, is: “Who can deliver reliable power and uptime as AI becomes permanent?” This thinking feeds his bullishness on certain small-cap stocks like Hut 8, IREN, and Cipher Mining, companies tied to data center infrastructure and power. It also points to a broader need for industrial-grade, reliable computing hardware at the edge of these networks. For companies building the physical backbone, from power management to the industrial panel PCs that monitor these systems, the demand shifts from speculative to essential. In this new utility-scale AI world, uptime is everything, and that requires rugged, industrial-grade hardware.

Time for a fundamental rethink

Look, the easy trade was betting on Nvidia’s chips. That narrative is well understood. But Jackson is pushing for a more nuanced view. The real story from CES 2026 might be the quiet admission that we’re past the point of no return. AI demand isn’t peaking; it’s just becoming normalized, boring even, like turning on a light switch. And when a technology becomes that ubiquitous, that reliable, it stops being a hype cycle and starts being the economy itself. The companies that provide the unwavering foundation for that will be the ones built to last. Seems like the smart money is starting to look beyond the silicon and toward the substation.